Natural Language Processing (NLP) is a subfield of artificial intelligence (AI) that deals with the interaction between computers and human languages. NLP is used to build applications such as speech recognition, natural language understanding, sentiment analysis, and machine translation.

The evolution of natural language processing

Natural Language Processing (NLP) has undergone significant evolution over the years, driven by advancements in technology and an increased need for the ability to process and understand human language.

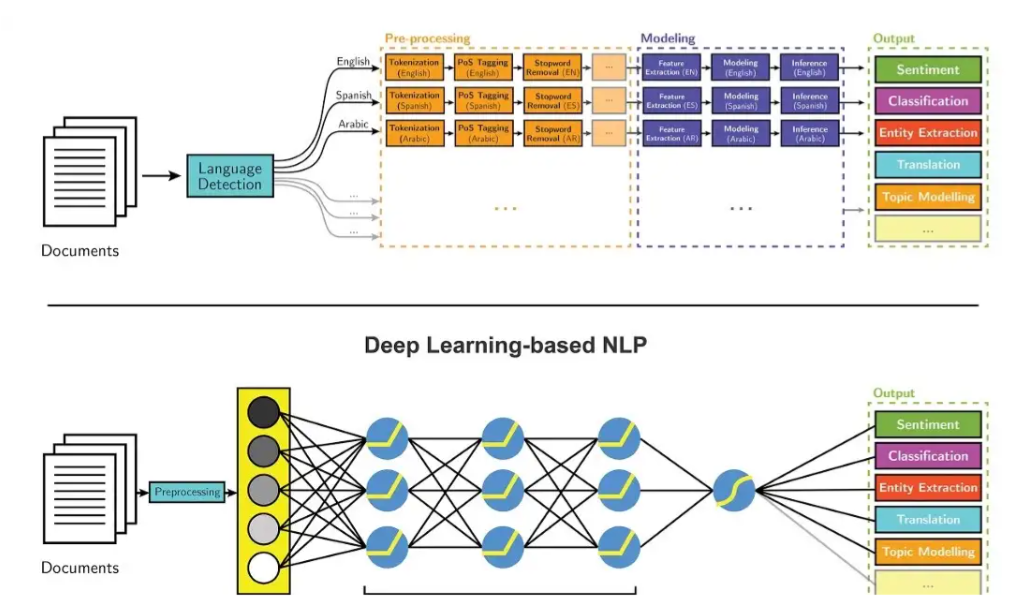

In the early days of NLP, the focus was on rule-based methods, which used predefined rules to analyse and understand text. These methods struggled with the nuances of human language and were not effective at handling large amounts of unstructured data.

With the advent of machine learning in the 1990s, NLP began to shift towards statistical methods. These methods used algorithms to learn from large amounts of labelled data and make predictions about new data. This significantly improved NLP tasks such as speech recognition and machine translation.

More recently, deep learning and neural networks have become increasingly popular in NLP. These methods have improved tasks such as language translation and text generation. The use of deep learning in NLP has been driven by the availability of large amounts of labelled data and the ability to train large neural networks.

The field of NLP is also being shaped by advancements in other areas, such as knowledge graphs and transfer learning. Knowledge graphs model the relationships between entities in text, while transfer learning fine-tunes pre-trained models for specific tasks. Both have further improved performance on NLP tasks.

Techniques and methods of natural language processing

Natural Language Processing (NLP) uses several techniques and strategies to analyse and understand human language. Some of the most common techniques include:

- Tokenisation: Tokenisation is the process of breaking down a sentence or paragraph into individual words or phrases, called tokens. This is usually the first step in an NLP task and helps to make the text more manageable for further processing.

- Part-of-Speech Tagging (POS): Part-of-Speech tagging is the process of identifying the grammatical role of each word in a sentence. This can include identifying nouns, verbs, adjectives, and adverbs.

- Named Entity Recognition (NER): Named Entity Recognition identifies and classifies named entities in text, such as people, organisations, and locations.

- Parsing: Parsing analyses a sentence or text to identify its grammatical structure and relationships between words. This can include identifying phrases, clauses, and dependencies between words.

- Sentiment Analysis: Sentiment analysis determines the emotional tone of a piece of text, such as whether it is positive, negative, or neutral.

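- Stemming and Lemmatisation: Stemming and lemmatisation are techniques used to reduce words to their base or root form. Stemming typically strips suffixes using simple rules, while lemmatisation maps each word to its dictionary form. Both can help with text analysis and improve the performance of NLP algorithms.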

- Machine Learning: Machine learning techniques such as supervised and unsupervised learning, deep learning and neural networks are widely used in NLP to train models to perform tasks such as language translation, text generation and language understanding.

- Knowledge Graphs: Knowledge Graphs are used to model and represent the relationships between entities and concepts in natural language text.
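
To make a few of the techniques above more concrete, here is a minimal sketch of tokenisation, stemming, and sentiment analysis using only the Python standard library. The function names (`tokenise`, `crude_stem`, `sentiment`) and the two word lists are hypothetical and chosen purely for illustration. The stemmer is a deliberately naive suffix stripper rather than an established algorithm, and the sentiment step is a simple word-count heuristic, not a trained model.

```python
import re

# Hypothetical, hand-picked word lists for illustration only.
# A real system would use a curated lexicon or a trained model.
POSITIVE = {"good", "great", "love", "excellent", "happy"}
NEGATIVE = {"bad", "poor", "hate", "terrible", "sad"}


def tokenise(text):
    """Lower-case the text and split it into word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def crude_stem(token):
    """Strip a few common English suffixes (naive; not a standard stemmer)."""
    for suffix in ("ing", "ed", "es", "s"):
        # Only strip when at least three characters remain.
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token


def sentiment(tokens):
    """Label tokens as positive, negative, or neutral by counting lexicon hits."""
    score = sum((t in POSITIVE) - (t in NEGATIVE) for t in tokens)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


text = "The support team was great and I love the new features."
tokens = tokenise(text)
stems = [crude_stem(t) for t in tokens]

print(tokens)
print(stems)
print(sentiment(tokens))
```

In practice, each of these steps would normally be handled by an NLP library or a trained model, but the sketch shows how the output of one step (tokens) feeds the next.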

The field of NLP is constantly evolving, with new techniques and technologies being developed regularly. Advancements in deep learning and neural networks have significantly improved NLP tasks such as language translation, text generation, and language understanding.

NLP can improve efficiency and accuracy in various industries by automating tasks that were previously done manually and providing insights that were previously difficult or impossible to obtain. NLP is also being used to create chatbots and other conversational interfaces, which are becoming increasingly popular for customer service and other applications.

Why is natural language processing important?

Natural Language Processing (NLP) is essential for several reasons:

- Improved communication: NLP allows computers to understand and communicate in human language, which is crucial to creating more natural and human-like interactions with technology. This can enhance communication between humans and computers, making it easier for people to interact with technology in their everyday lives.

- Automation of tedious tasks: NLP can automate tasks previously done manually, such as data entry, text summarisation, and sentiment analysis. This can improve efficiency and accuracy in various industries.

- Insight generation: NLP can provide insights that were previously difficult or impossible to obtain, for example by identifying patterns and trends in large amounts of unstructured data such as social media posts, customer feedback, and research papers.

- Personalisation: NLP can be used to personalise products and services for individual customers. It can be used to understand customer preferences and behaviour and to provide personalised recommendations.

- Advancements in other technologies: NLP also plays a crucial role in improving other technologies, such as virtual assistants, chatbots, and self-driving cars, by allowing them to understand and respond to human language.

- Accessibility: NLP can also be used to make technology more accessible for people with disabilities, such as speech recognition for people with mobility impairments or text-to-speech synthesis for people with visual impairments.